
6/4/2023 CSV to AWS PostgreSQL

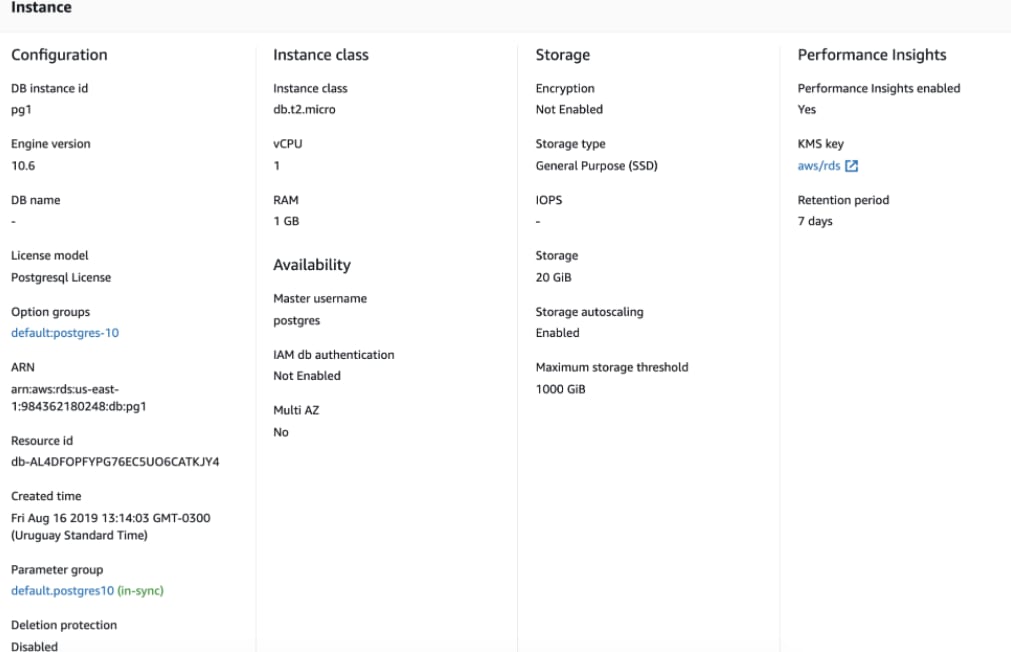

I have a project in which I read a CSV file from Amazon S3 and process the data with Spark. I then create a DataFrame and load it into a PostgreSQL database via JDBC. However, I'm getting a constraint violation error because the `customernumber` column in the `customerlocations` table is unique.

What I need is for the Spark job to read the CSV file, process it, and then append the data to the PostgreSQL table. If a duplicate key is found in the `customernumber` column, I want that row to be ignored and the non-duplicate records to be inserted without failing the Spark job.

This is my table structure: `CREATE TABLE IF NOT EXISTS Customerlocations(`

I have a DataFrame in Spark with the same columns. I want to write this DataFrame over JDBC so that a `customernumber` violation does nothing for that row and the remaining rows are still inserted, so I am writing it like this:

`customerlocationDF.write \ .option("dbtable", "customerlocations") \ .option("rewriteBatchedStatements", "true") \ .option("upsertKeyCols", "customernumber") \ .option("insertStatement", "INSERT INTO customerlocations (LOCATIONID, CUSTOMERNUMBER, CUSTOMERCOUNTRY, CUSTOMERSTATE, CUSTOMERDISTRICT, CUSTOMERCITY, SERIALNO, CORPID, INDATE, CUSTOMERMOB2, DISTRIID) VALUES (?, ?, ?, ?, ?, ?, ?, ?, ?, ?, ?) ON CONFLICT (CUSTOMERNUMBER) DO NOTHING") \`

It throws this error: `ERROR Executor: Exception in task 0.0 in stage 4.0 (TID 3)`
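One possible way to get the "skip duplicates, insert the rest" behaviour is sketched below. As far as I know, Spark's built-in JDBC writer does not document `insertStatement` or `upsertKeyCols` options, and `rewriteBatchedStatements` is a MySQL Connector/J property rather than a PostgreSQL one, so those settings are probably ignored and the writer falls back to a plain `INSERT`. The sketch bypasses the JDBC writer and runs `INSERT ... ON CONFLICT DO NOTHING` from each partition instead. It assumes `psycopg2` is installed on the executors and PostgreSQL is 9.5 or later. The helper names (`build_insert_sql`, `make_partition_writer`) and the connection parameters are hypothetical, not part of the original question.

```python
from typing import Iterable, Sequence

# Column names taken from the INSERT statement in the question.
COLUMNS = [
    "locationid", "customernumber", "customercountry", "customerstate",
    "customerdistrict", "customercity", "serialno", "corpid",
    "indate", "customermob2", "distriid",
]


def build_insert_sql(table: str, columns: Sequence[str], conflict_col: str) -> str:
    """Build a parameterised INSERT that silently skips rows hitting the unique key."""
    col_list = ", ".join(columns)
    placeholders = ", ".join(["%s"] * len(columns))  # psycopg2 uses %s, not ?
    return (
        f"INSERT INTO {table} ({col_list}) VALUES ({placeholders}) "
        f"ON CONFLICT ({conflict_col}) DO NOTHING"
    )


def make_partition_writer(conn_params: dict,
                          table: str = "customerlocations",
                          columns: Sequence[str] = COLUMNS,
                          conflict_col: str = "customernumber",
                          batch_size: int = 1000):
    """Return a function that can be passed to DataFrame.foreachPartition."""
    sql = build_insert_sql(table, columns, conflict_col)

    def write_partition(rows: Iterable) -> None:
        # Imported here so the import happens on the executor
        # (assumption: psycopg2 is available there).
        import psycopg2
        from psycopg2.extras import execute_batch

        conn = psycopg2.connect(**conn_params)
        try:
            with conn.cursor() as cur:
                # Each Row is converted to a plain tuple; the DataFrame must
                # already have its columns in the same order as `columns`.
                execute_batch(cur, sql, (tuple(r) for r in rows),
                              page_size=batch_size)
            conn.commit()
        finally:
            conn.close()

    return write_partition


# Usage on the driver (connection values are placeholders, not real settings):
#   writer = make_partition_writer(
#       {"host": "<rds-endpoint>", "dbname": "<db>",
#        "user": "<user>", "password": "<password>"})
#   customerlocationDF.select(*COLUMNS).foreachPartition(writer)
```

Because the conflict is resolved inside PostgreSQL, duplicates within the CSV and duplicates against rows already in the table are both skipped, and the Spark task does not fail on them. An alternative, if staying with the JDBC writer matters, would be to append into a staging table without the unique constraint and then run a single `INSERT ... SELECT ... ON CONFLICT DO NOTHING` on the database side.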
